The embedded world is extremely flexible because it is full of open standards. We therefore expect the big processor vendors to push harder than Google can push back. OpenCL support is very important for GPGPU libraries like ArrayFire, VexCL and ViennaCL, as it would let them be ported to Android much faster.

Apple has now introduced Metal on iOS, increasing fragmentation even further. StreamHPC and friends are working hard to get one language available on all platforms, so we can focus on bringing solutions to you. Note that if OpenCL becomes popular on Android, this increases the chance that it will be accepted on other mobile platforms like iOS and Windows Mobile/Phone.

On the other hand, OpenCL is being blocked wherever possible, as GPGPU enables unique apps. A RenderScript-only or Metal-only app is good for the sales of one type of smartphone: good for them, bad for developers who want to target the whole market.

Getting the current status

To get more insight into the current situation, Pavan Yalamanchili of ArrayFire has created a spreadsheet. It is publicly editable, so anybody can help complete it. Be clear about the version of Android you are running, as Google may have put up some blocks in, for instance, version 4.4.4. If you found drivers but did not get OpenCL running, please put that in the notes. You can easily find out whether your smartphone supports OpenCL using the OpenCL-Info app. Thanks in advance for helping out!

Why not just RenderScript?

We think RenderScript could be built on top of OpenCL. This would allow new programming languages and help find the optimal programming solution faster than relying on Google's engineers alone: solving this problem is not about being smart, but about being open to more routes.

The same goes for Metal, which even tries to replace both OpenCL and OpenGL. Again, it is a higher-level language that could be expressed in OpenGL and OpenCL.

Let’s see whether Apple and Google serve their dedicated developers, or whether we, the developers, must serve them. Let’s hope for the best.

Altera has just released the free ebook FPGAs for Dummies. One part of the book is devoted to OpenCL, so we quote some extracts from one of its chapters here. The rest of the book is also worth a read, so if you want to check the rest of the text, just

Quite a lot of “find OpenCL” code for CMake has been floating around. If you haven’t heard of CMake, it’s the most useful tool for building cross-platform software.

A high-level language has been on OpenCL’s roadmap for years, to be started once the foundations were ready. So with OpenCL 2.0, SYCL was born.

Unfortunately, we are not at SuperComputing 2015 this year, for reasons you will hear about later. But we haven’t forgotten the people who are going and looking for their share of OpenCL. Below is a list of companies with a booth at SC15, which was assembled by the guys of

Our expertise in parallel image processing is ideally suited to meeting the large computing demands of modern medical imaging. High-resolution microscopy images can easily be several GB in size, and high-content screening of microscopy data sets using classical software tools is extremely time-consuming. Using GPU-based parallel computing solutions, we can dramatically cut processing and waiting times. For example, we helped the Memorial Sloan Kettering Cancer Center by improving a tool they use daily. Where their analysis previously took one hour, it now takes just two minutes: a speed-up of 30x. Their productivity has gone up at virtually no extra cost, as waiting times for results are significantly reduced without the need to buy new computers.

StreamHPC also has experience in high-performance implementations of

Computing demands in computer vision are high, and real-time processing with low latency is often desirable. Computer vision can benefit greatly from parallelization: higher processing speeds can improve object recognition rates, while FPGA solutions may reduce energy demands or make processing feel lag-free. At StreamHPC, we have helped several customers optimize their software to run faster on a lower power budget. We can support you with dedicated GPU- or FPGA-based solutions to meet your demands.

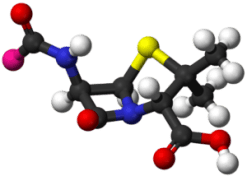

For Stanford University, we further optimised part of TeraChem, a general-purpose quantum chemistry package designed to run on NVIDIA GPU architectures. Our work added an extra 70% performance to the already optimised CUDA code.

For the University of Manchester, we developed a high-performance implementation of the UNIFAC group contribution model for their research on atmospheric aerosol particles. Where an OpenMP implementation of the original single-threaded code reduced the run time from 32 seconds to about 10 seconds on a quad-core CPU, we eventually brought it down to 0.062 seconds using OpenCL on a Xeon Phi accelerator: a speed-up of 160x over OpenMP.
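The University of Manchester speed-ups can be checked directly from the run times quoted above. Since the OpenMP time is given as "about" 10 seconds, the ratios below are approximate; the last line is not a figure stated in the text, only the product of the first two ratios.

$$
\begin{aligned}
S_{\text{OpenMP vs. serial}} &= \frac{32\ \text{s}}{10\ \text{s}} \approx 3.2 \\
S_{\text{OpenCL vs. OpenMP}} &= \frac{10\ \text{s}}{0.062\ \text{s}} \approx 161 \approx 160 \\
S_{\text{OpenCL vs. serial}} &= \frac{32\ \text{s}}{0.062\ \text{s}} \approx 516 \approx 3.2 \times 161
\end{aligned}
$$

Measured against the original single-threaded code, the OpenCL version is therefore roughly 516x faster.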

Embedded is an industry often combined with

Want an overview of what the Heterogeneous System Architecture (HSA) does, or want to know what terminology has changed since version 1.0? Read on.

Last year we bought
