Altera has just released the free ebook FPGAs For Dummies. One part of the book is devoted to OpenCL, so we’ll quote some extracts from one of its chapters here. The rest of the book is worth a read too; to get the full text, just fill in the form on Altera’s webpage.

At StreamHPC we’re interested in OpenCL on FPGAs for one reason: many companies run their software on GPUs when they should be using FPGAs instead, while others stick to FPGAs and ignore GPUs completely. The main reason, we think, is that converting CUDA to VHDL, or Verilog to CPU intrinsics, is simply too painful. Another reason is the amount of investment already put into a certain technology. We believe that OpenCL can solve both of these issues. OpenCL code is much more portable and can be ported to a new architecture in a relatively short time (if the developer is familiar with the project, the hardware and OpenCL). We are highly familiar with the latter two, which means we’re used to getting new projects up and running quickly.

Since both Altera and Xilinx have invested in OpenCL, code has become more portable between the two vendors’ FPGAs. Altera has a public SDK (and they’re proudly loud about it), while Xilinx offers OpenCL support in their latest tools (although they’re unfortunately much quieter about it).

Now, let’s go back to the quotes from the book that we wanted to share with you.

Andrew Moore describes OpenCL effectively in just a few sentences:

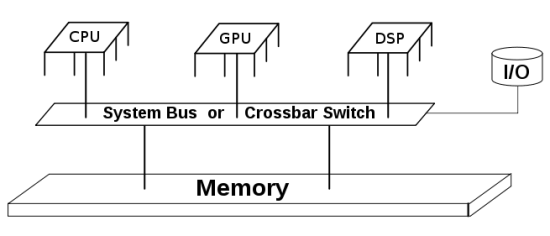

The need for heterogeneous computing is leading to new programming languages to exploit the new hardware. One example is the OpenCL first developed by Apple, Inc. OpenCL is a framework for writing programs that execute across heterogeneous platforms consisting of CPUs, GPUs, DSPs, FPGAs, and other types of processors. OpenCL includes a language for developing kernels (functions that execute on hardware devices) as well as application programming interfaces (APIs) that define and control the various platforms. OpenCL allows for parallel computing using task-based and data-based parallelism.
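To make the idea of a kernel a bit more concrete, here is a minimal sketch of our own (it is not taken from the book). The string holds a small OpenCL C kernel that adds two vectors; the Python code underneath only emulates what a device does with it, calling the kernel body once per work-item. The names `vec_add_kernel` and `enqueue_nd_range` are hypothetical helpers for this illustration and not part of any OpenCL API, and no real device or SDK is involved.

```python
# OpenCL C source of a simple data-parallel kernel, shown for reference only.
# It is not compiled or executed in this sketch.
OPENCL_SOURCE = """
__kernel void vec_add(__global const float* a,
                      __global const float* b,
                      __global float* out)
{
    int gid = get_global_id(0);   /* index of this work-item */
    out[gid] = a[gid] + b[gid];
}
"""


def vec_add_kernel(gid, a, b, out):
    """Plain-Python stand-in for the kernel body: one call per work-item."""
    out[gid] = a[gid] + b[gid]


def enqueue_nd_range(kernel, global_size, *args):
    """Hypothetical helper: emulate a 1D kernel launch on the host.

    A real device would run the work-items in parallel; here they simply
    run one after another in a loop.
    """
    for gid in range(global_size):
        kernel(gid, *args)


a = [1.0, 2.0, 3.0, 4.0]
b = [10.0, 20.0, 30.0, 40.0]
out = [0.0] * len(a)

enqueue_nd_range(vec_add_kernel, len(a), a, b, out)
print(out)
```

The point of the sketch is the division of labour: the kernel describes the work for a single element, while the host side decides how many work-items to launch and which buffers they see.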

The author also shares some interesting insights into why OpenCL should be used on FPGAs:

FPGAs are inherently parallel, so they’re a perfect fit with OpenCL’s parallel computing capabilities. FPGAs give you an alternative to the typical data or task parallelism by offering a pipeline parallelism where tasks can be spawned in a push-pull configuration with each task using different data from the previous task with or without host interaction. OpenCL allows you to develop your code in the familiar C programming language but using the additional capabilities provided by OpenCL. These kernels can be sent to the FPGAs without your having to learn the low-level HDL coding practices of FPGA designers. Generally, there are several benefits for software developers and system designers to use OpenCL to develop code for FPGAs:

- Simplicity and ease of development: Most software developers are familiar with the C programming language, but not low-level HDL languages. OpenCL keeps you at a higher level of programming, making your system open to more software developers.

- Code profiling: Using OpenCL, you can profile your code and determine the performance-sensitive pieces that could be hardware accelerated as kernels in an FPGA.

- Performance: Performance per watt is the ultimate goal of system design. Using an FPGA, you’re balancing high performance in an energy-efficient solution.

- Efficiency: The FPGA has a fine-grain parallelism architecture, and by using OpenCL you can generate only the logic you need to deliver one fifth of the power of the hardware alternatives.

- Heterogeneous systems: With OpenCL, you can develop kernels that target FPGAs, CPUs, GPUs, and DSPs seamlessly to give you a truly heterogeneous system design.

- Code reuse: The holy grail of software development is achieving code reuse. Code reuse is often an elusive goal for software developers and system designers. OpenCL kernels allow for portable code that you can target for different families and generations of FPGAs from one project to the next, extending the life of your code.

Today, OpenCL is developed and maintained by the technology consortium Khronos Group. Most FPGA manufacturers provide Software Development Kits (SDKs) for OpenCL development on FPGAs.
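The pipeline parallelism mentioned in the extract can be pictured with a toy model. The sketch below is our own illustration, assuming three made-up stages (`read_stage`, `scale_stage` and `accumulate_stage` are hypothetical names) chained with Python generators, so each item flows from one stage to the next without a central controller. It only models the dataflow: Python runs the stages one step at a time, whereas on an FPGA each stage would be its own piece of hardware working on a different item at the same moment.

```python
def read_stage(samples):
    """First stage: feed the input items into the pipeline one by one."""
    for sample in samples:
        yield sample


def scale_stage(stream, factor):
    """Second stage: multiply every item coming from the previous stage."""
    for sample in stream:
        yield sample * factor


def accumulate_stage(stream):
    """Third stage: emit a running total of the items seen so far."""
    total = 0
    for sample in stream:
        total += sample
        yield total


# Chain the stages; nothing runs until the final consumer pulls results.
pipeline = accumulate_stage(scale_stage(read_stage([1, 2, 3, 4]), 2))
results = list(pipeline)
print(results)
```

Each stage only knows about the stream it reads from, which is roughly what makes this style a natural fit for hardware where stages are wired directly to each other.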

You can continue here if you want to read the rest of this ebook. And of course, whenever you want to learn more, feel free to write to us, or follow the conversation on Twitter through our dedicated account: @OpenCLonFPGAs.
